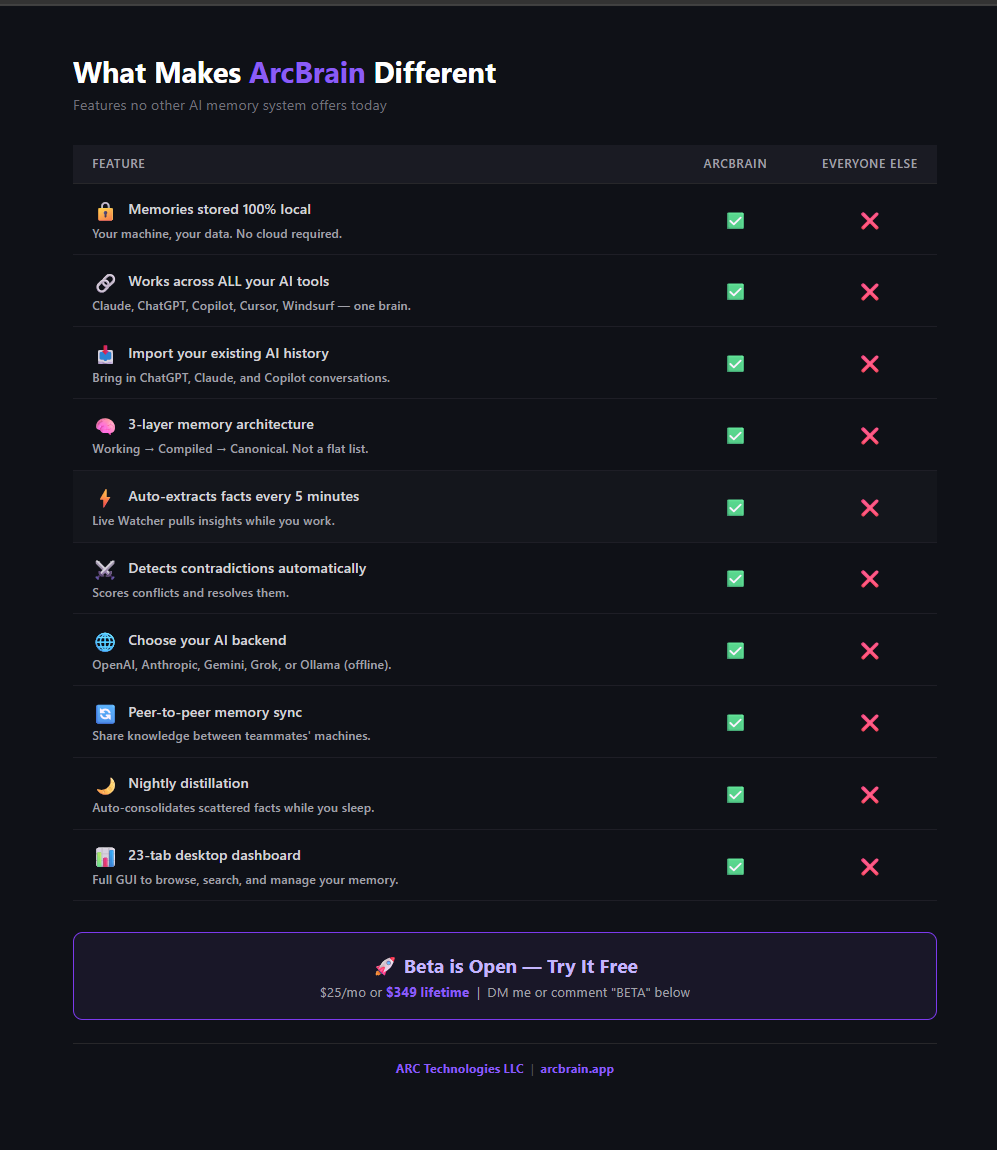

Your AI coding assistant finally has a memory.

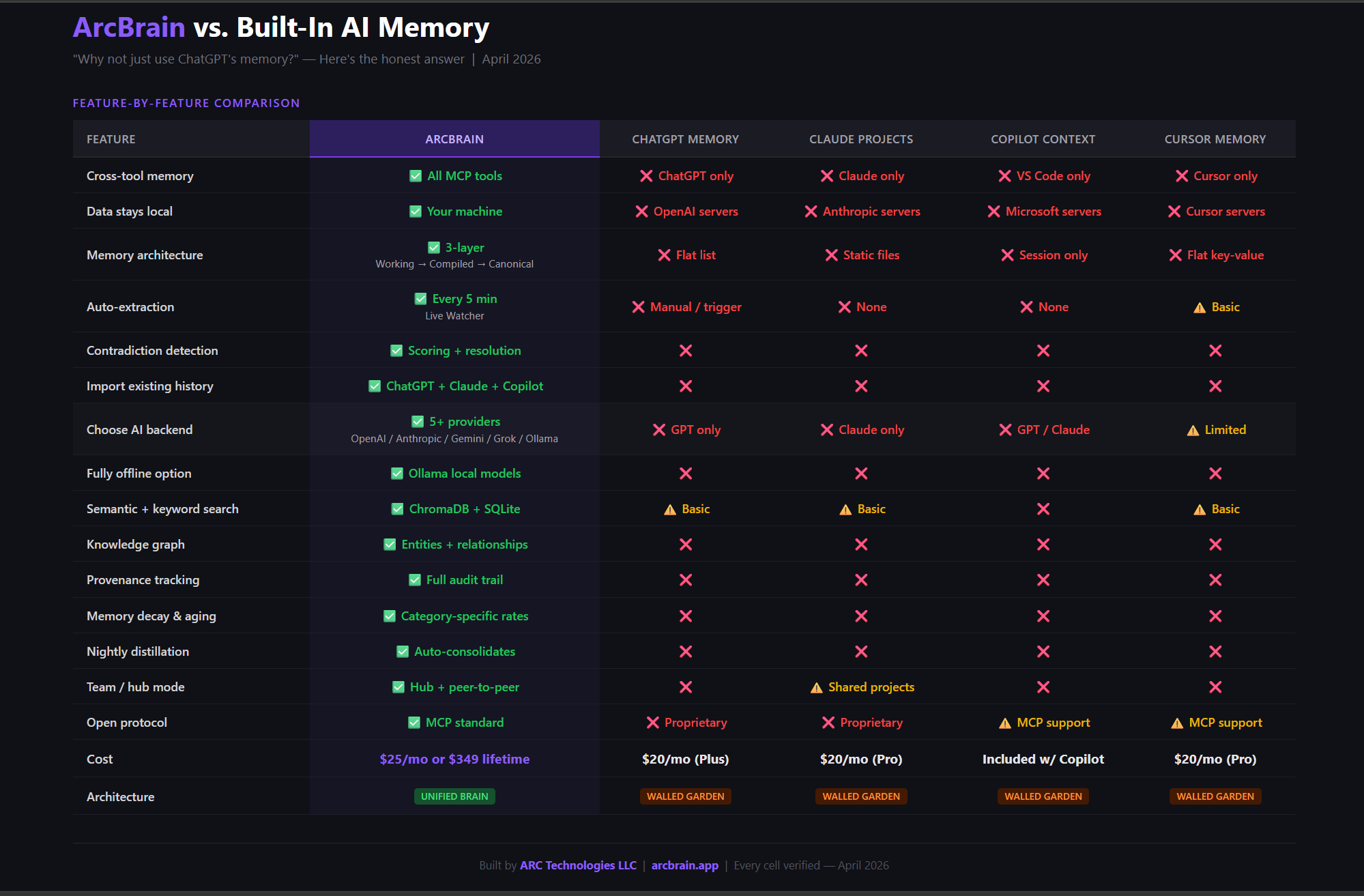

ArcBrain is a persistent memory layer for VS Code Copilot, Cursor, Claude Desktop, and Windsurf. It remembers your decisions, configs, snippets, and context permanently, across every session.